What if the next revolution in artificial intelligence came not from ever more powerful processors, but from chips that think like a human brain? That is the promise of neuromorphic computing, a technology that is finally leaving the laboratory to enter the real world in 2026.

What is a neuromorphic chip?

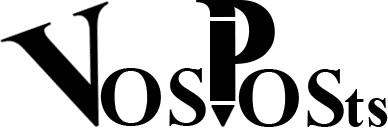

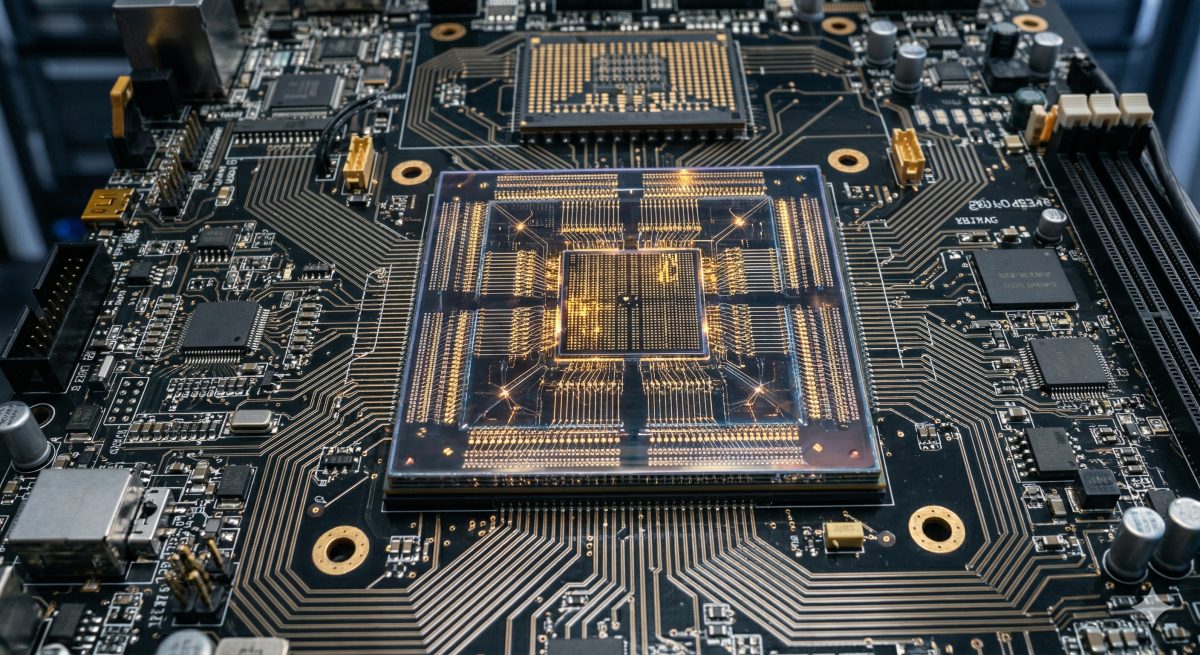

Unlike conventional processors (CPUs) or graphics cards (GPUs), a neuromorphic chip does not execute a clocked stream of instructions, whether sequentially as on a CPU or in lockstep across thousands of cores as on a GPU. It draws directly on the architecture of the biological brain: artificial neurons communicate with each other through brief electrical impulses (spikes) passed across artificial synapses, much as biological neurons do. Information is processed only when an event occurs, which avoids much of the energy waste of traditional architectures that compute continuously, even when nothing is happening.

The result is spectacular: for real-time, event-driven workloads, these chips can perform complex artificial intelligence tasks while consuming up to 1,000 times less energy than an equivalent GPU. That difference could radically transform the global technology landscape.
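The event-driven principle described above can be illustrated with a leaky integrate-and-fire neuron, a common textbook model of a spiking neuron. This is only a minimal sketch: the class name, the threshold, the time constant, and the input spikes are illustrative assumptions, and it does not reflect how any particular chip is implemented.

```python
import math


class LIFNeuron:
    """Leaky integrate-and-fire neuron that is updated only when a spike arrives.

    All parameter values are illustrative assumptions, not hardware figures.
    """

    def __init__(self, threshold=1.0, tau_ms=20.0):
        self.threshold = threshold  # potential at which the neuron fires
        self.tau_ms = tau_ms        # leak time constant, in milliseconds
        self.potential = 0.0
        self.last_time_ms = 0.0
        self.updates = 0            # number of times any work was done

    def receive(self, time_ms, weight):
        """Handle one input spike; return True if the neuron fires."""
        # Apply the leak for the whole silent interval in a single step,
        # instead of ticking a clock while nothing happens.
        elapsed = time_ms - self.last_time_ms
        self.potential *= math.exp(-elapsed / self.tau_ms)
        self.last_time_ms = time_ms
        self.potential += weight
        self.updates += 1
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return True
        return False


# Hypothetical input: (time in ms, synaptic weight), with long silent gaps.
input_spikes = [(1.0, 0.6), (2.0, 0.6), (500.0, 0.6), (900.0, 0.6), (901.0, 0.6)]

neuron = LIFNeuron()
output_spikes = []
for time_ms, weight in input_spikes:
    if neuron.receive(time_ms, weight):
        output_spikes.append(time_ms)

print(output_spikes)   # times at which the neuron fired
print(neuron.updates)  # one update per input spike, none during silence
```

In this sketch the neuron fires only when two spikes arrive close together, and it performs five updates over roughly 900 ms of simulated time. A clock-driven loop with a 1 ms step would have performed about 900 updates for the same result.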

Intel Loihi 3 and Hala Point: reaching one billion neurons

Intel is today one of the leaders in this technology with its Loihi processor range. The third generation, Loihi 3, launched in 2026, pushes the limits even further. But it is the Hala Point system, built on the previous generation, that impresses most: in a box no larger than a microwave oven, Intel has integrated 1,152 interconnected Loihi 2 processors, representing 1.15 billion neurons and 128 billion artificial synapses. The whole system draws a maximum of only 2,600 watts, a fraction of what a conventional AI data center consumes.

This system can reach up to 20 quadrillion operations per second (20 petaops), a staggering figure that opens the door to applications that were previously out of reach in brain simulation and real-time sensory processing.
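To put the Hala Point figures in perspective, the short calculation below derives per-chip and per-watt values from the numbers quoted above. It is a back-of-the-envelope sketch: it divides peak throughput by maximum power, so the result is a rough ratio of headline figures, not a measured efficiency.

```python
# Figures quoted in this article for Hala Point.
CHIPS = 1_152
NEURONS = 1.15e9
SYNAPSES = 128e9
MAX_POWER_W = 2_600
PEAK_OPS_PER_S = 20e15  # 20 quadrillion operations per second

neurons_per_chip = NEURONS / CHIPS
synapses_per_chip = SYNAPSES / CHIPS
synapses_per_neuron = SYNAPSES / NEURONS

# Peak operations per second per watt, expressed in tera-operations (1e12).
tops_per_watt = PEAK_OPS_PER_S / MAX_POWER_W / 1e12

print(f"Neurons per chip:    {neurons_per_chip:,.0f}")
print(f"Synapses per chip:   {synapses_per_chip:,.0f}")
print(f"Synapses per neuron: {synapses_per_neuron:.0f}")
print(f"Peak TOPS per watt:  {tops_per_watt:.2f}")
```

Each chip therefore hosts roughly one million neurons, and each neuron has on the order of a hundred synapses on average.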

IBM NorthPole: another giant joins the dance

Intel is not alone in this field. IBM brought its NorthPole architecture into large-scale production in 2026, confirming that neuromorphic computing is no longer a laboratory curiosity but a fully industrial technology. NorthPole stands out for fusing memory and computation directly on the chip, which removes the memory bottleneck (the von Neumann bottleneck) that slows conventional processors as data shuttles between processor and external memory. This architecture enables highly efficient image processing and pattern recognition.

Concrete applications already in the field

One of the most striking demonstrations of 2026 is the quadruped robot ANYmal D Neuro. Equipped with a Loihi 3 chip, this industrial inspection robot operated for 72 hours continuously on a single charge, nine times longer than its predecessor equipped with GPUs. For companies deploying these robots in nuclear plants, pipelines, or hazardous zones, this autonomy is a game changer.

The automotive sector is not left behind. Mercedes-Benz and BMW are integrating neuromorphic vision systems in their vehicles to manage autonomous emergency braking with reaction times below one millisecond. Where a conventional GPU-based system takes a few tens of milliseconds to analyze a scene and react, a neuromorphic chip processes information almost instantaneously, like a biological reflex.

In healthcare, researchers are using neuromorphic chips to analyze brain signals in real time, opening the door to brain-machine interfaces that are more responsive and less energy-hungry. New-generation hearing and visual prostheses already benefit from this technology to offer an unprecedented quality of sensory processing.

Why it matters: the AI energy crisis

The rapid rise of artificial intelligence has a hidden cost that can no longer be ignored: its colossal energy consumption. The data centers that run large language models and generative AI systems already consume the equivalent of the electricity production of small countries. According to the International Energy Agency, energy demand from data centers could double by 2028.

Neuromorphic computing offers a way out. By consuming energy only when an event requiring processing occurs, these chips offer a radically more frugal computing model. For AI embedded in smartphones, cars, drones, or medical devices, this is a revolution: it is now possible to run sophisticated AI models directly on the device, without needing to connect to a remote server.
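The difference between the two computing models can be sketched with a simple energy budget. Every number below is a placeholder chosen for illustration, not a measurement of any real device, and the function names are hypothetical. The model assumes an always-on processor draws constant power, while an event-driven chip pays a small idle cost plus a fixed energy cost per event.

```python
def continuous_energy_j(active_power_w, duration_s):
    """Energy of a processor that computes continuously for the whole duration."""
    return active_power_w * duration_s


def event_driven_energy_j(idle_power_w, energy_per_event_j, event_count, duration_s):
    """Energy of a chip that idles cheaply and pays only per processed event."""
    return idle_power_w * duration_s + energy_per_event_j * event_count


DURATION_S = 3600.0  # one hour of operation

# Illustrative placeholders: 5 W always-on versus 10 mW idle and 1 microjoule per event.
always_on = continuous_energy_j(5.0, DURATION_S)
sparse = event_driven_energy_j(0.01, 1e-6, 1_000_000, DURATION_S)
dense = event_driven_energy_j(0.01, 1e-6, 10_000_000_000, DURATION_S)

print(f"Always-on:            {always_on:,.0f} J")
print(f"Event-driven, sparse: {sparse:,.0f} J")
print(f"Event-driven, dense:  {dense:,.0f} J")
```

Under these assumptions the saving is large when events are rare and shrinks as the event rate rises, which is why the approach suits sensors and embedded devices that spend most of their time waiting for something to happen.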

Challenges that remain

Despite these advances, neuromorphic computing faces several obstacles. The first is programming: the development tools for these chips are still immature compared to NVIDIA's CUDA ecosystem for GPUs. Intel has launched the Lava framework to facilitate programming of Loihi, but it will take time for the developer community to adopt it widely.

The second challenge is versatility. Neuromorphic chips excel in specific tasks such as pattern recognition, sensory processing, and real-time learning, but they are not designed to replace GPUs in training large language models. The future therefore likely lies in a hybrid architecture, where each type of processor is used where it excels.

Finally, scaling to industrial levels remains difficult. Mass-producing these chips at a competitive cost is a hurdle that chip manufacturers have yet to clear. But the massive investments by Intel, IBM, Samsung, and dozens of startups in this field suggest that prices will fall rapidly.

Toward a future inspired by nature

Neuromorphic computing illustrates a broader trend in current technology: rather than relying on brute force, it draws inspiration from nature to find more elegant and efficient solutions. The human brain, with its 86 billion neurons, consumes only about 20 watts, less than a light bulb. Coming even modestly close to that efficiency could transform not just computing, but also our relationship with energy and the environment.
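The gap that remains can be estimated from figures already quoted in this article: 86 billion neurons at 20 watts for the brain, against 1.15 billion neurons at a maximum of 2,600 watts for Hala Point. The comparison is deliberately crude, since an artificial neuron is far simpler than a biological one and the hardware figure is a maximum rather than an average.

```python
# Figures quoted in this article.
BRAIN_NEURONS = 86e9
BRAIN_POWER_W = 20.0
HALA_POINT_NEURONS = 1.15e9
HALA_POINT_MAX_POWER_W = 2_600.0

# Power per neuron, converted to nanowatts (1 W = 1e9 nW).
brain_nw_per_neuron = BRAIN_POWER_W / BRAIN_NEURONS * 1e9
hala_nw_per_neuron = HALA_POINT_MAX_POWER_W / HALA_POINT_NEURONS * 1e9

ratio = hala_nw_per_neuron / brain_nw_per_neuron

print(f"Brain:      {brain_nw_per_neuron:.2f} nW per neuron")
print(f"Hala Point: {hala_nw_per_neuron:.0f} nW per neuron")
print(f"Ratio:      about {ratio:,.0f}x")
```

By this rough measure, the brain still uses on the order of ten thousand times less power per neuron than the hardware does at full load.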

In 2026, neuromorphic chips are no longer a distant promise. They are in robots, cars, and medical devices. And in a few years, they could well be in your smartphone.
